Comparison among various Black Box or Functional Software Testing Techniques

Testing Effort

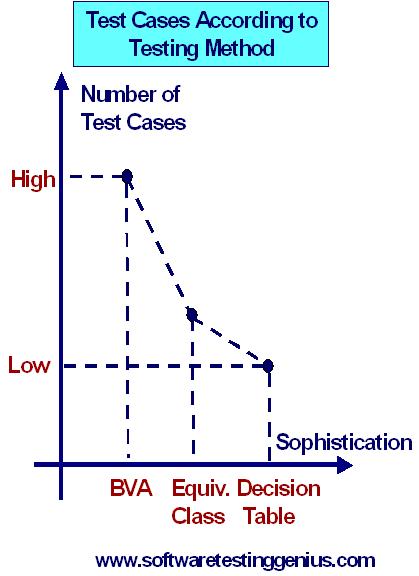

The functional methods vary both in the number of test cases they generate and in the effort needed to develop those test cases. To compare the three techniques, namely boundary value analysis (BVA), equivalence class partitioning and decision table based testing, let us focus our attention on the first graph.

Domain-based techniques such as BVA do not recognize data or logical dependencies. They generate test cases very mechanically, which also makes them easy to automate. Techniques such as equivalence class testing do take data dependencies into account, so they demand more skill from the tester: the thinking goes into identifying the equivalence classes, and after that the process is mechanical. As the graph shows, the decision table based technique is the most sophisticated, because it requires the tester to consider both data and logical dependencies. Once we have a good set of conditions, however, the resulting test cases are complete and minimal.
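To make "mechanical" concrete, here is a minimal sketch of BVA test case generation, alongside worst-case testing (mentioned in the guidelines later in this article) for comparison. It assumes integer input variables with inclusive ranges, the usual single-fault BVA values (min, min+, nominal, max-, max) and the midpoint as nominal value; the triangle program with sides in [1, 200] is a hypothetical example. Note that the generator never looks at how the variables relate to each other (for instance the triangle inequality), which is exactly why it needs no tester insight.

```python
from itertools import product
from typing import Dict, List, Tuple

Range = Tuple[int, int]  # inclusive (min, max) of an integer input variable


def boundary_values(lo: int, hi: int) -> List[int]:
    """min, min+, nominal, max-, max for one variable (assumes hi - lo >= 4)."""
    return [lo, lo + 1, (lo + hi) // 2, hi - 1, hi]


def bva_cases(ranges: Dict[str, Range]) -> List[Dict[str, int]]:
    """Single-fault BVA: vary one variable at a time, keep the rest nominal."""
    nominal = {name: (lo + hi) // 2 for name, (lo, hi) in ranges.items()}
    cases = [dict(nominal)]  # the all-nominal case, included once
    for name, (lo, hi) in ranges.items():
        for value in (lo, lo + 1, hi - 1, hi):
            case = dict(nominal)
            case[name] = value
            cases.append(case)
    return cases


def worst_case_cases(ranges: Dict[str, Range]) -> List[Dict[str, int]]:
    """Worst-case testing: Cartesian product of the five values per variable."""
    names = list(ranges)
    value_lists = [boundary_values(*ranges[name]) for name in names]
    return [dict(zip(names, combo)) for combo in product(*value_lists)]


# Hypothetical example: the three sides of a triangle, each in [1, 200].
triangle = {"a": (1, 200), "b": (1, 200), "c": (1, 200)}
print(len(bva_cases(triangle)))         # 13  (4n + 1 with n = 3)
print(len(worst_case_cases(triangle)))  # 125 (5 ** n with n = 3)
```

Both generators are purely mechanical; the only difference is how many test cases they produce, which grows linearly for BVA and exponentially for worst-case testing.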

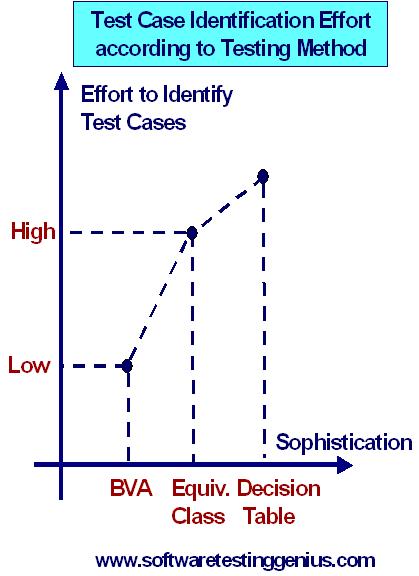

The second graph plots the effort required to identify test cases against the sophistication of each technique.

Thus the effort required to identify test cases is lowest for BVA and highest for decision tables. The end result is a trade-off between test case identification effort and test case execution effort: if we shift our effort toward more sophisticated testing methods, we reduce our test execution time. This matters because tests are usually executed many times. Note also that judging testing quality by the sheer number of test cases has drawbacks similar to judging programming productivity by lines of code.
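The trade-off can be illustrated with a simple cost model. This is a sketch under stated assumptions, not a measurement: it assumes a one-off identification effort per technique, a fixed cost for running one test case once, and that the whole suite is rerun each time. The class, function names and all numbers below are hypothetical, chosen only to show the shape of the trade-off.

```python
from dataclasses import dataclass


@dataclass
class TechniqueCost:
    """Effort figures for one test design technique (illustrative units)."""
    name: str
    identification_effort: float  # one-off effort to design the suite
    test_cases: int                # number of test cases in the suite
    cost_per_execution: float      # effort to run one test case once

    def total_effort(self, runs: int) -> float:
        # Design the suite once, then execute every test case `runs` times.
        return self.identification_effort + runs * self.test_cases * self.cost_per_execution


def break_even_runs(cheap: TechniqueCost, sophisticated: TechniqueCost) -> int:
    """Smallest number of runs after which the sophisticated technique is
    no more expensive overall than the cheap one."""
    per_run_cheap = cheap.test_cases * cheap.cost_per_execution
    per_run_soph = sophisticated.test_cases * sophisticated.cost_per_execution
    if per_run_soph >= per_run_cheap:
        raise ValueError("the sophisticated suite must be cheaper to execute")
    runs = 0
    while sophisticated.total_effort(runs) > cheap.total_effort(runs):
        runs += 1
    return runs


# Made-up numbers, used only to illustrate the trade-off.
bva = TechniqueCost("BVA", identification_effort=2, test_cases=40, cost_per_execution=0.25)
dt = TechniqueCost("decision table", identification_effort=30, test_cases=12, cost_per_execution=0.25)
print(break_even_runs(bva, dt))  # 4
```

With these figures the extra design effort of the decision table pays for itself after four runs; every further regression run widens the gap in its favour.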

Effect of various Testing Strategies on Testing Efficiency:

It is evident from all the functional testing strategies mentioned above that functional test cases suffer from two problems: some functionality is left untested, and some test cases are redundant. So gaps do occur in functional test cases, and these gaps are reduced by using more sophisticated techniques.

We can compute various ratios, such as the total number of test cases generated by method A to the number generated by method B, or even ratios on a per-test-case basis, which are more difficult to compute. Management sometimes demands such numbers even when they have little real meaning. When we see several test cases with the same purpose, we sense redundancy; detecting gaps is much more difficult. If we use only functional testing, the best we can do is compare the test cases that result from two methods. In general, the more sophisticated method will help us recognize gaps, but nothing is guaranteed.
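One way to compare two suites is to label each test case with the purpose it exercises and then look for repeated purposes (redundancy) and purposes covered by only one suite (gaps). The sketch below assumes that labelling has already been done; the function name and labels are hypothetical. Method A is BVA on a triangle program with sides in [1, 200]: in every BVA case two sides stay at the nominal value 100, so the 13 cases give 1 equilateral, 9 isosceles and 3 "not a triangle" outcomes and never a scalene one. Method B is assumed to be one test case per output equivalence class.

```python
from collections import Counter
from typing import Dict, List


def compare_suites(purposes_a: List[str], purposes_b: List[str]) -> Dict[str, object]:
    """Compare two suites, each described by one purpose label per test case."""
    count_a, count_b = Counter(purposes_a), Counter(purposes_b)
    return {
        "size_ratio": len(purposes_a) / len(purposes_b),
        "redundant_in_a": {p for p, n in count_a.items() if n > 1},
        "redundant_in_b": {p for p, n in count_b.items() if n > 1},
        "gaps_in_a": set(count_b) - set(count_a),  # purposes only B covers
        "gaps_in_b": set(count_a) - set(count_b),  # purposes only A covers
    }


# Method A: BVA on the triangle program (13 cases).
bva_purposes = ["equilateral"] + ["isosceles"] * 9 + ["not a triangle"] * 3
# Method B: one test case per output equivalence class (4 cases).
ec_purposes = ["equilateral", "isosceles", "scalene", "not a triangle"]

report = compare_suites(bva_purposes, ec_purposes)
print(report["size_ratio"])  # 3.25
print(report["gaps_in_a"])   # {'scalene'}
```

The ratio of 3.25 says little on its own; the useful findings are the redundancy in the BVA suite and the scalene gap that only the comparison reveals.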

How to measure Testing Effectiveness of these Testing Techniques:

The easiest choices are:

1) Be Dogmatic: We can select a method, use it to generate test cases and then run the test cases. We can improve on this by not being dogmatic and allowing the tester to choose the most appropriate method. We can gain another incremental improvement by devising appropriate hybrid methods.

2) Use structural testing techniques to measure test effectiveness, for example by checking how much of the program's structure the test cases exercise.

Note that the most meaningful interpretation of testing effectiveness is also the most difficult to apply. We would like to know how effective a set of test cases is at finding the faults present in a program. This is problematic for two reasons:

1) It presumes that we are aware of the faults in a program.

2) Proving that a program is fault-free is equivalent to the famous halting problem of computer science, which is known to be undecidable.

Then What is the Best Solution?

The best solution is to work backward from fault types. Given a particular kind of fault, we can choose testing methods (functional and structural) that are likely to reveal faults of that type. If we couple this with knowledge of the most likely kinds of faults, we end up with a pragmatic approach to testing effectiveness. The approach improves further if we track the kinds of faults, and their frequencies, in the software we develop.
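Working backward from fault types can be sketched as a lookup from fault type to the methods likely to reveal it, weighted by how often each fault type has been recorded. Everything here is a hypothetical illustration: the catalogue entries, the fault-history counts and the function name are assumptions, and a real team would build the catalogue from its own fault tracking.

```python
from typing import Dict, List

# Hypothetical catalogue: fault type -> methods likely to reveal it.
METHODS_BY_FAULT: Dict[str, List[str]] = {
    "off-by-one at a range boundary": ["boundary value analysis", "robustness testing"],
    "whole input class mishandled": ["equivalence class testing"],
    "wrong combination of conditions": ["decision table testing"],
    "unexecuted code": ["statement/branch coverage"],
}


def prioritize_methods(fault_history: Dict[str, int]) -> List[str]:
    """Rank methods by how often the fault types they target were found.

    fault_history maps a fault type to the number of faults of that type
    recorded in software the team has developed.
    """
    score: Dict[str, int] = {}
    for fault, count in fault_history.items():
        for method in METHODS_BY_FAULT.get(fault, []):
            score[method] = score.get(method, 0) + count
    # Highest score first; ties broken alphabetically for a stable order.
    return sorted(score, key=lambda m: (-score[m], m))


# Hypothetical fault counts from past projects.
history = {
    "wrong combination of conditions": 12,
    "off-by-one at a range boundary": 7,
    "unexecuted code": 2,
}
print(prioritize_methods(history))
```

Fault types missing from the catalogue are simply ignored, so the ranking only becomes useful as the tracked fault history and the catalogue grow together.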

Key Guidelines for the Functional Testing

1) If the variables refer to physical quantities then domain testing and equivalence class testing should be used.

2) If the variables are independent then domain testing and equivalence class testing should be used.

3) If the variables are dependent, decision table testing should be practiced.

4) If the single-fault assumption is warranted then BVA and robustness testing should be used.

5) If the multiple-fault assumption is warranted then worst case testing, robust worst case testing and decision table based testing should be practiced.

6) If the program has significant exception handling, robustness testing and decision table based testing should be used.

7) If the variables refer to logical quantities, equivalence class testing and decision table testing should be practiced.
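The guidelines above can be read as a small rule table. The sketch below encodes each of the seven guidelines as one rule and collects the recommended techniques in order, without duplicates. The `ProgramProfile` fields and the `recommend` function are hypothetical names introduced for illustration; deciding whether a profile flag is true remains the tester's judgment.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ProgramProfile:
    """Characteristics of the program under test (set by the tester)."""
    physical_quantities: bool = False
    independent_variables: bool = False
    dependent_variables: bool = False
    single_fault_assumption: bool = False
    multiple_fault_assumption: bool = False
    significant_exception_handling: bool = False
    logical_quantities: bool = False


def recommend(p: ProgramProfile) -> List[str]:
    """Apply guidelines 1 to 7 in order and return the techniques they name."""
    rules = [
        (p.physical_quantities, ["domain testing", "equivalence class testing"]),
        (p.independent_variables, ["domain testing", "equivalence class testing"]),
        (p.dependent_variables, ["decision table testing"]),
        (p.single_fault_assumption, ["boundary value analysis", "robustness testing"]),
        (p.multiple_fault_assumption,
         ["worst case testing", "robust worst case testing", "decision table testing"]),
        (p.significant_exception_handling, ["robustness testing", "decision table testing"]),
        (p.logical_quantities, ["equivalence class testing", "decision table testing"]),
    ]
    picked: List[str] = []
    for applies, techniques in rules:
        if applies:
            for technique in techniques:
                if technique not in picked:  # keep first occurrence only
                    picked.append(technique)
    return picked


print(recommend(ProgramProfile(dependent_variables=True,
                               significant_exception_handling=True)))
```

Because several guidelines can apply at once, the result is a union of techniques rather than a single choice, which matches the advice to combine methods rather than be dogmatic.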


An expert on R&D, Online Training and Publishing. He holds an M.Tech. (Honours) and has been part of the STG team since its inception.