Understanding the ABC of Software Product Quality Assurance

It is not possible to test quality into a product when the development is close to being finished.

As many QA experts and test managers have asserted, quality assurance activities must start early and become an integrated part of the entire development project and of the mindset of all stakeholders.

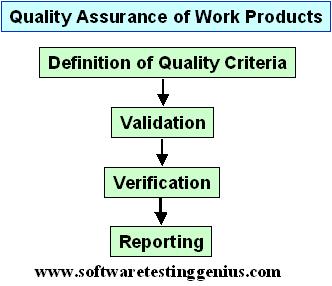

Quality assurance comprises the following four activities:

1) Definition of quality criteria

2) Validation

3) Verification

4) Quality reporting

Bear in mind that validation is not necessarily performed before verification; in many organizations it is the other way around, or the two run in parallel.

First of all, the quality criteria must be defined. These criteria express the quality level that must be reached, that is, “what is sufficiently good.”

These criteria can be very different from product to product. They depend on the business needs and the product type. Different quality criteria will be set for a product that will just be thrown away when it is not working than for a product that is expected to work for many years with a great risk of serious consequences if it does not work.

The following two quality assurance activities check whether the object under test meets the quality criteria:

1) Validation;

2) Verification.

The two have different goals and use different techniques. The object to test is delivered for validation and verification from the applicable development process.

Validation is the assessment of the correctness of the product (the object) in relation to the users' needs and requirements. We can also say that validation answers the question: “Are we building the correct product?”

Validation must determine if the customer's needs and requirements are correctly captured and correctly expressed and understood. We must also determine if what is delivered reflects these needs and requirements.

When the requirements have been agreed upon and approved, we must ensure that during the entire development life cycle:

1) Nothing has been forgotten.

2) Nothing has been added.

It is obvious that if something is forgotten, the correct product has not been delivered. It does, however, happen all too often that requirements are overlooked somewhere in the development process. This costs money, time, and credibility.

On the surface it is perhaps not so bad if something has been added. But it does cost money and affect the project plan when a developer, probably with the best intentions, adds some functionality that he or she imagines would benefit the end user.

What is worse is that the extra functionality will probably never be tested in the system and acceptance tests, simply because the testers don't know of its existence. This means that the product is sent out to the customers with some untested functionality, which will lie like a mine under the surface of the product. Maybe it will never be hit, or maybe it will be hit, and in that case the consequences are unforeseeable.

The probability that the extra functionality will never be hit is, however, rather high, since the end user will probably not know about it anyway.

Validation during the development process is performed by analysis of trace information. If requirements are traced to design and code, it is easy to find out whether some requirements are not fulfilled, or whether some design or code is not based on requirements.
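
The trace analysis described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the requirement IDs, file names, and the `analyse_trace` helper are all hypothetical, and a real project would read the trace data from its requirements management tool rather than hard-code it.

```python
# Hypothetical trace data, invented for illustration.
requirements = {"REQ-1", "REQ-2", "REQ-3"}

# Each design or code item lists the requirement IDs it traces back to.
trace = {
    "design/discount.md": {"REQ-1"},
    "src/discount.py": {"REQ-1"},
    "src/invoice.py": {"REQ-2"},
    "src/export_csv.py": set(),  # nobody asked for this one
}


def analyse_trace(requirements, trace):
    """Return (forgotten requirements, items not based on any requirement)."""
    # Every requirement ID that at least one item claims to implement.
    covered = set().union(*trace.values())
    # "Nothing has been forgotten": requirements with no item behind them.
    forgotten = sorted(requirements - covered)
    # "Nothing has been added": items that trace to no known requirement.
    added = sorted(item for item, reqs in trace.items()
                   if not (reqs & requirements))
    return forgotten, added


forgotten, added = analyse_trace(requirements, trace)
print("Forgotten requirements:", forgotten)
print("Items without a requirement:", added)
```

With the sample data, `REQ-3` is reported as forgotten and `src/export_csv.py` as added, which are exactly the two situations the list above warns about.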

The ultimate validation is the user acceptance test, where the users test that the original requirements are implemented and that the product fulfills its purpose.

Verification, the other quality assurance activity, is the assessment of whether the object fulfills the specified requirements.

Verification answers the question: “Are we building the product correctly?”

The difference between validation and verification can be illustrated as follows:

a) Validation confirms that a required calculation of discount has been designed and coded in the product.

b) Verification confirms that the implemented algorithm calculates the discount as it is supposed to in all details.
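
To make point b) concrete, here is a minimal sketch of what verifying a discount calculation "in all details" might look like. The discount rule itself (10% off orders of 100.00 or more) is an assumption made up for this example, as are the names `discount` and `verify_discount`; the article does not specify any particular rule. Validation would only ask whether the required discount feature exists at all; the checks below go further and probe the boundaries of the algorithm.

```python
from decimal import Decimal

# Hypothetical requirement, invented for illustration:
# orders of 100.00 or more get 10% off; smaller orders get no discount.
THRESHOLD = Decimal("100.00")
RATE = Decimal("0.10")


def discount(order_total: Decimal) -> Decimal:
    """Return the discount amount for an order total."""
    if order_total < 0:
        raise ValueError("order total must not be negative")
    if order_total >= THRESHOLD:
        # Round the result to whole cents.
        return (order_total * RATE).quantize(Decimal("0.01"))
    return Decimal("0.00")


def verify_discount() -> None:
    """Verification: check the algorithm in detail, including boundaries."""
    assert discount(Decimal("0.00")) == Decimal("0.00")
    assert discount(Decimal("99.99")) == Decimal("0.00")    # just below the threshold
    assert discount(Decimal("100.00")) == Decimal("10.00")  # exactly on the threshold
    assert discount(Decimal("250.50")) == Decimal("25.05")


verify_discount()
```

`Decimal` is used instead of `float` so that the boundary comparisons and the rounding to cents are exact.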

We have several techniques for verification. The ones to choose depend on the test object. In the early phases the test object is usually a document, for example in the form of:

1) Plans;

2) Requirements specification;

3) Design;

4) Test specifications;

5) Code.

The verification techniques for these are the static test techniques like:

1) Inspection;

2) Review (informal, peer, technical, and management);

3) Walkthrough.

Once some code has been produced, we can use static analysis on the code as a verification technique. This does not execute the code; it verifies that the code is written according to coding standards and that it does not have obvious data flow faults. Finally, dynamic testing, where the test object is executable software, can be used.
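
As a rough illustration of static analysis, the following sketch uses Python's standard `ast` module to look for one simple data flow anomaly: a variable that is assigned but never read. The sample function in `SOURCE` and the helper `unused_assignments` are hypothetical, and the check is deliberately crude (it ignores scopes, for instance); real static analysis tools are far more thorough.

```python
import ast

# Hypothetical code under analysis; "tax" is assigned but never used.
SOURCE = '''
def total_price(price, quantity):
    tax = 0.25
    subtotal = price * quantity
    return subtotal
'''


def unused_assignments(source: str) -> list[str]:
    """Return names that are assigned somewhere in the source but never read."""
    tree = ast.parse(source)  # parses only; the code is never executed
    stored, loaded = set(), set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name):
            if isinstance(node.ctx, ast.Store):
                stored.add(node.id)   # name appears as an assignment target
            elif isinstance(node.ctx, ast.Load):
                loaded.add(node.id)   # name is read somewhere
    return sorted(stored - loaded)


print(unused_assignments(SOURCE))
```

The point of the sketch is that the fault is found by inspecting the structure of the code alone, without ever calling `total_price`.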

We can also use dynamic analysis, especially during component testing. This technique reveals faults that are otherwise very difficult to identify.

Quality assurance reports on the findings and results should be produced. If the test object is not found to live up to the quality criteria, the object is returned to development for correction. At the same time incident reports should be filled in and given to the right authority.

Once the test object has passed the validation and verification, it should be placed under configuration management.

