A Simple Explanation of the Hierarchy of Testing Levels

Testing is usually relied upon to detect the faults remaining from earlier stages, in addition to the faults introduced during coding itself. For this reason, the testing process uses several levels of testing, each of which aims to test a different aspect of the system.

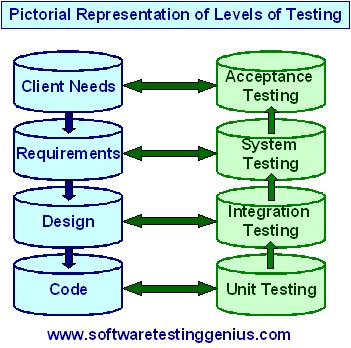

The hierarchical levels of testing are:

1) Unit testing,

2) Integration testing,

3) System testing, and

4) Acceptance testing

These different levels of testing attempt to detect different types of faults.

The relation between the faults introduced in different phases and the different levels of testing is shown in the figure.

1) Unit testing: The first level of testing is called unit testing. Unit testing essentially verifies the code produced by individual programmers, and is typically done by the programmer of the module. Generally, a programmer offers a module for integration and use by others only after it has been unit tested satisfactorily.

2) Integration testing: The next level of testing is often called integration testing. Here, many unit-tested modules are combined into subsystems, which are then tested. The goal is to see whether the modules can be integrated properly, so the emphasis is on testing the interfaces between modules. This testing activity can be considered testing the design.
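The difference between these first two levels can be sketched with Python's standard `unittest` module. Everything in this example is hypothetical and chosen only for illustration: `parse_order` and `order_total` stand in for two modules written by different programmers, and `PRICES` is made-up data. The first two test classes check each module on its own; the third checks the interface between them, that is, whether the output of one is accepted as the input of the other.

```python
import unittest
from typing import Dict, List


# Module A (hypothetical): parses "sku: quantity" lines into order items.
def parse_order(text: str) -> List[Dict]:
    items = []
    for line in text.strip().splitlines():
        sku, quantity = line.split(":")
        items.append({"sku": sku.strip(), "quantity": int(quantity)})
    return items


# Module B (hypothetical): prices a list of order items.
PRICES = {"apple": 0.5, "pear": 0.75}  # made-up price table


def order_total(items: List[Dict]) -> float:
    return sum(PRICES[item["sku"]] * item["quantity"] for item in items)


class ParseOrderUnitTest(unittest.TestCase):
    """Unit level: module A is tested in isolation."""

    def test_single_line(self):
        self.assertEqual(
            parse_order("apple: 4"), [{"sku": "apple", "quantity": 4}]
        )


class OrderTotalUnitTest(unittest.TestCase):
    """Unit level: module B is tested with hand-written input."""

    def test_total_for_handwritten_items(self):
        self.assertEqual(order_total([{"sku": "pear", "quantity": 2}]), 1.5)


class OrderPipelineIntegrationTest(unittest.TestCase):
    """Integration level: module A's output is fed into module B."""

    def test_parser_output_is_accepted_by_pricing(self):
        items = parse_order("apple: 4\npear: 2")
        self.assertEqual(order_total(items), 3.5)
```

Saved to a file, these tests can be run with `python -m unittest`. If module A were changed to emit a key named `qty` instead of `quantity`, both sets of unit tests could still be made to pass while the integration test would fail, which is the kind of interface fault this level is meant to catch.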

The next levels are 3) system testing and 4) acceptance testing.

In system testing, the entire software system is tested. The reference document for this process is the requirements document, and the goal is to see whether the software meets its requirements. This is often a large exercise, which for large projects may last many weeks or months. It is essentially a validation exercise, and in many situations it is the only validation activity.

Acceptance testing is often performed with realistic data of the client to demonstrate that the software is working satisfactorily. It may be done in the setting in which the software is to eventually function. Acceptance testing essentially tests if the system satisfactorily solves the problems for which it was commissioned.

These levels of testing are performed when a system is being built from the components that have been coded.

There is another level of testing, called regression testing, that is performed when changes are made to an existing system. Change is fundamental to software; any software must undergo changes. However, when an existing system is modified, testing also has to make sure that the modification has not had the undesired side effect of making some of the earlier services faulty. That is, besides ensuring the desired behavior of the new services, testing has to ensure that the desired behavior of the old services is maintained. This is the task of regression testing.

For regression testing, some test cases that have been executed on the old system are maintained, along with the output produced by the old system. These test cases are executed again on the modified system, and its output is compared with the earlier output to make sure that the system works as before on these test cases. This is frequently a major task when modifications are made to existing systems.
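The record-and-compare procedure described above can be sketched in a few lines of Python. All names here are hypothetical: `old_format_name` stands in for the existing system, `new_format_name` for a modification that preserves behavior, and `broken_format_name` for a modification that does not. In practice the baseline would be saved to a file instead of being kept in memory.

```python
from typing import Callable, List, Tuple

Case = Tuple[str, str]
Baseline = List[Tuple[Case, str]]


def old_format_name(first: str, last: str) -> str:
    """Stand-in for the existing system."""
    return f"{last}, {first}"


def new_format_name(first: str, last: str) -> str:
    """Stand-in for a modified system that keeps the old behavior."""
    return ", ".join([last, first])


def broken_format_name(first: str, last: str) -> str:
    """Stand-in for a modification with an undesired side effect."""
    return f"{first} {last}"


def record_baseline(system: Callable[..., str], cases: List[Case]) -> Baseline:
    """Run the old system on each test case and keep its output."""
    return [(case, system(*case)) for case in cases]


def find_regressions(system: Callable[..., str], baseline: Baseline):
    """Re-run the saved cases and report every output that changed."""
    regressions = []
    for case, expected in baseline:
        actual = system(*case)
        if actual != expected:
            regressions.append((case, expected, actual))
    return regressions


CASES = [("Ada", "Lovelace"), ("Alan", "Turing")]
BASELINE = record_baseline(old_format_name, CASES)

print(find_regressions(new_format_name, BASELINE))          # prints []
print(len(find_regressions(broken_format_name, BASELINE)))  # prints 2
```

An empty list means the modified system behaves as before on the saved cases; it says nothing about inputs that are not in the baseline.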

Complete regression testing of large systems can take a considerable amount of time, even if automation is used. If a small change is made to the system, practical considerations often require that regression testing be done with only a subset of test cases instead of the entire test suite. This requires suitably selecting, from the suite, the test cases that exercise those parts of the system that could be affected by the change. Test case selection for regression testing is an active research area, and researchers have proposed many different approaches.
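As a minimal sketch of one simple selection strategy (not any particular published approach), suppose we already know which modules each test exercises. The `COVERAGE` map, the test names, and the module names below are all invented for illustration; in a real project such a map might come from coverage measurements or dependency analysis.

```python
from typing import Dict, List, Set

# Hypothetical map from test name to the modules that test exercises.
COVERAGE: Dict[str, Set[str]] = {
    "test_login": {"auth", "session"},
    "test_checkout": {"cart", "payment"},
    "test_profile_page": {"auth", "profile"},
    "test_search": {"catalog"},
}


def select_tests(coverage: Dict[str, Set[str]], changed: Set[str]) -> List[str]:
    """Keep only the tests that touch at least one changed module."""
    return sorted(
        test for test, modules in coverage.items() if modules & changed
    )


print(select_tests(COVERAGE, {"auth"}))
# prints ['test_login', 'test_profile_page']
```

The selection is only as good as the map behind it: a test that depends on a changed module indirectly, without that dependency being recorded, would be skipped.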


An expert on R&D, Online Training and Publishing. He holds an M.Tech. (Honours) and has been a part of the STG team since its inception.

These are the levels of “boring” testing, though.

You need people who are motivated, and this approach is neither efficient nor motivating.