Practical Approach for Choosing Effective Test Cases

Every tester begins the testing effort by building test cases. Creating test cases is not as simple a task as it appears. It is an art, and a complex one at that. The task is complex for the following reasons:

1) Different varieties of test cases are required for different categories or classes of information.

2) Not every test case within a particular test suite is necessarily good. A test case can be good and effective in many different ways.

3) Different testers design their test cases according to a particular style of testing, for example risk-based testing or domain testing. It is well known that good risk-based test cases are quite different from good domain-based test cases.

Brian Marick coined a term for lightly documented test cases: he calls each one a “test idea”. According to Brian, “A test idea is nothing but a short statement about something which is required to be tested.” For example, if we are testing a square-root function, one test idea can be “test a number having value lower than zero”. The motive behind this test idea is to verify whether the code is able to handle an error case.

For any type of software application, we can create a huge number of test cases. But due to constraints of time and money we are not able to execute all of them, and we restrict our testing effort to a few of them. There is no hard and fast rule or international standard available to us that lays down selection criteria for test cases.

Generally we restrict our testing to confirming that the program performs satisfactorily as per the specifications, and we hesitate to go beyond that. Thus the test cases are designed and executed to ensure the program's functionality within the scope of the specifications. Hence it is very difficult to conclude that the program will perform according to the specifications for all possible combinations of inputs. Even for a simple example such as a leap-year function, there is a huge number of likely inputs for testing.
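The square-root test idea quoted above can be turned into a small executable check. The sketch below is only an illustration: it assumes the function under test is Python's standard `math.sqrt`, which signals a negative argument by raising `ValueError`, and the helper name `handles_negative_input` is hypothetical.

```python
import math


def handles_negative_input(sqrt_func):
    """Test idea: 'test a number having value lower than zero'.

    Returns True if sqrt_func reports the error case by raising
    ValueError, and False if it silently returns a value.
    """
    try:
        sqrt_func(-1.0)
    except ValueError:
        return True
    return False


# math.sqrt is used here only as a stand-in for the function under test.
print(handles_negative_input(math.sqrt))
```

The test idea itself stays a one-line statement; the code is just one possible way of recording it once the expected error behaviour is known.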

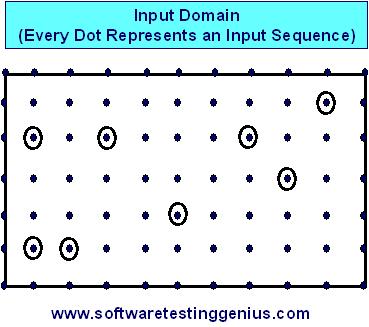

Consider the input domain described in the following figure.

Each dot represents an input sequence. There are millions of possible input sequences, and we want to select only a few of them. Some of the selected inputs are shown in circles. Even if the program works correctly on the randomly selected input sequences (shown as circles), there is still a very high probability that it fails on some of the millions of other cases that are not tested.
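The risk of relying on a few random inputs can be made concrete with the leap-year example. The sketch below assumes a hypothetical faulty implementation, `is_leap_buggy`, that forgets the century rule, an input domain of the years 1 to 10000, and ten inputs drawn independently at random. None of these choices come from a real project; they only illustrate the argument.

```python
def is_leap(year):
    """Reference rule: divisible by 4, except centuries not divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


def is_leap_buggy(year):
    """Hypothetical faulty version that ignores the century exception."""
    return year % 4 == 0


# Assumed input domain for the illustration.
years = range(1, 10001)

# Inputs on which the faulty version gives the wrong answer.
failing = [y for y in years if is_leap_buggy(y) != is_leap(y)]
failure_rate = len(failing) / len(years)

# Chance that 10 independent random picks all avoid the failing inputs.
sample_size = 10
miss_probability = (1 - failure_rate) ** sample_size

print(len(failing), failure_rate, round(miss_probability, 2))
```

Under these assumptions only 75 of the 10000 inputs expose the defect, so ten random picks miss it roughly 93% of the time.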

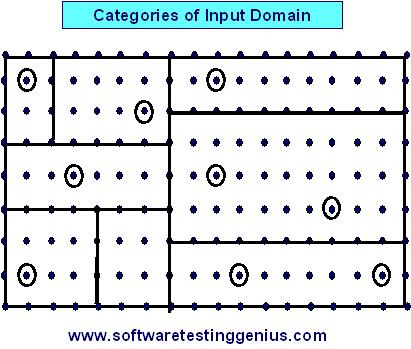

If there are too many cases to test individually, and testing a random subset of them is not justifiable, then the solution is for the tester to be systematic. The tester should categorize the likely inputs and select one representative test case from every category, as shown in the following figure.

If a representative is well selected, it is reasonable to assume that if the program works correctly on the one representative test case of a category, it will work correctly on all test cases within that category. Hence we must design these categories of the input or output domain on the basis of some logic, grouping related test cases into the same category. This reduces the number of test cases without reducing confidence in the program. However, designing the categories requires not only domain knowledge but also experience in testing. Finally, we can conclude that no matter how hard we try, how much time we spend, and how many staff and computers we use, we will never be able to do enough testing.
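For the leap-year function, the categorization described above might look like the following sketch. The four categories, the representative years, and the helper `run_representatives` are assumptions chosen for illustration; other groupings are possible.

```python
def is_leap(year):
    """Function under test: the usual Gregorian leap-year rule."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


# One representative input and its expected result per category.
REPRESENTATIVES = [
    ("not divisible by 4", 2023, False),
    ("divisible by 4 but not by 100", 2004, True),
    ("divisible by 100 but not by 400", 1900, False),
    ("divisible by 400", 2000, True),
]


def run_representatives(func):
    """Return the names of the categories whose representative fails."""
    return [name for name, year, expected in REPRESENTATIVES
            if func(year) != expected]


print(run_representatives(is_leap))                 # correct version
print(run_representatives(lambda y: y % 4 == 0))    # century rule missing
```

Four test cases stand in for the whole input domain, and a version that forgets the century rule is caught by the representative of the third category.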

We can still miss some bugs, for the following reasons:

1) Presence of large domain of likely inputs for testing.

2) Presence of several likely paths across which we could test the program.

3) Presence of complex issues related to the user interface: many issues remain in the user interface, and they give rise to many design issues that call for testing.

For example, if the specification states that the year 2004 is not a leap year and the program gives the same output, that is, 2004 is not a leap year (instead of showing that it is a leap year), then one may say that there is no bug. The program meets the requirements of the customer as stated. But we know that 2004 is a leap year. This instance is extremely simple, and we know the correct answer. However, situations can arise in which a ready answer is not available to us and the answer is not so simple. In such a situation we should at least aim to execute every line of the program once during testing.

For a very large program, 100% code coverage is very difficult to achieve. Some tools are available to show the extent of coverage, but their applicability is very limited. The selection of test cases is a very interesting area of study, research and practice. Many techniques are available for the selection, prioritization and minimization of test cases.

4) Inadequate or poor planning of testing efforts.

5) Adoption of poor testing methodology.

6) Inadequate understanding of the role of testing.

7) Deployment of the wrong people for testing.
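The aim mentioned earlier, executing every line of the program at least once, can be checked on a small scale with Python's standard `sys.settrace` hook. This is a minimal sketch, not a replacement for a real coverage tool; the function `classify` and the helper `lines_executed` are hypothetical names used only for illustration.

```python
import sys


def classify(n):
    if n < 0:
        return "negative"
    if n == 0:
        return "zero"
    return "positive"


def lines_executed(func, inputs):
    """Run func on each input and return the body lines that were executed,
    as offsets from the 'def' line."""
    code = func.__code__
    hit = set()

    def tracer(frame, event, arg):
        if frame.f_code is not code:
            return None  # do not trace other functions
        offset = frame.f_lineno - code.co_firstlineno
        if event == "line" and offset > 0:  # ignore the def line itself
            hit.add(offset)
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        for value in inputs:
            func(value)
    finally:
        sys.settrace(previous)  # always restore the previous hook
    return hit


partial = lines_executed(classify, [5])
full = lines_executed(classify, [-1, 0, 5])
print(sorted(full - partial))  # lines the single-input run never reached
```

A single positive input leaves the two error-handling `return` lines unexecuted, while one input per branch reaches every line. Executing every line still does not prove that each line is correct.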


An expert on R&D, Online Training and Publishing. He is M.Tech. (Honours) and is a part of the STG team since inception.