ISTQB Foundation Level Exam Crash Course Part-3

This is Part 3 of 35, containing 5 questions (Q. 11 to 15) with detailed explanations, as expected in the ISTQB Foundation Level Exam under the latest syllabus (updated in 2011).

Careful study of all 175 questions should be of great help in passing the ISTQB Foundation Level Exam.

Q. 11: What is Unit or Component Testing?

Before testing of the code can start, clearly the code has to be written. This is shown at the bottom of the V-model. Generally, the code is written in component parts, or units. The units are usually constructed in isolation, for integration at a later stage. Units are also called programs, modules or components.

Unit testing is intended to ensure that the code written for the unit meets its specification, prior to its integration with other units. In addition to checking conformance to the program specification, unit testing would also verify that all of the code that has been written for the unit can be executed. For this second aim, the developer derives inputs and expected outputs from the code that has been written, rather than from the specification.

The test bases for unit testing can include:

1) The component requirements

2) The detailed design

3) The code itself

Unit testing requires access to the code being tested. Thus test objects (i.e. what is under test) can be the components, the programs, data conversion/migration programs and database modules. Unit testing is often supported by a unit test framework. In addition, debugging tools are often used.

One approach to unit testing is Test Driven Development. As its name suggests, the test cases are written first; the code is then built, tested and changed until the unit passes its tests. This is an iterative approach to unit testing.
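As an illustration of a unit test framework used in a test-first style, here is a minimal sketch in Python using the standard `unittest` module. The function `leap_year` and the test names are hypothetical and chosen only for this example; in Test Driven Development the test class would be written before the function body.

```python
import unittest


def leap_year(year: int) -> bool:
    """Hypothetical unit under test: the Gregorian leap-year rule."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


class LeapYearTest(unittest.TestCase):
    # In TDD these tests are written first and fail until leap_year() is built.
    def test_year_divisible_by_four(self):
        self.assertTrue(leap_year(2012))

    def test_century_is_not_leap(self):
        self.assertFalse(leap_year(1900))

    def test_every_fourth_century_is_leap(self):
        self.assertTrue(leap_year(2000))

    def test_ordinary_year(self):
        self.assertFalse(leap_year(2011))


def run_tests() -> unittest.TestResult:
    """Load and run the test case, returning the framework's result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(LeapYearTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    run_tests()
```

Each test checks one rule from the unit's specification, so a failure points directly at the rule that the code does not yet satisfy.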

Unit testing is usually performed by the developer who wrote the code (and who may also have written the program specification). Defects found and fixed during unit testing are often not recorded.

<<<<<< =================== >>>>>>

Q. 12: What is Integration Testing?

Once the units have been written, the next stage would be to put them together to create the system. This is called integration. It involves building something large from a number of smaller pieces.

The purpose of integration testing is to expose defects in the interfaces and in the interactions between integrated components or systems.

The test bases for integration testing can include:

1) The software and system design

2) A diagram of the system architecture

3) Workflows and use-cases

The test objects would essentially be the interface code. This can include subsystems’ database implementations.
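A small sketch may help show what "testing the interface" means in practice. The two classes below, `PriceCatalog` and `OrderCalculator`, are hypothetical components invented for this example. The check exercises the call from one component to the other rather than either component on its own.

```python
class PriceCatalog:
    """Hypothetical component A: returns unit prices in cents."""

    def __init__(self, prices_in_cents):
        self._prices = dict(prices_in_cents)

    def price_of(self, item: str) -> int:
        return self._prices[item]


class OrderCalculator:
    """Hypothetical component B: totals an order by calling the catalog."""

    def __init__(self, catalog):
        self._catalog = catalog

    def total(self, order: dict) -> int:
        # The interface under test: B calls A.price_of() for every item.
        return sum(self._catalog.price_of(item) * quantity
                   for item, quantity in order.items())


def integration_check() -> int:
    """Wire the real components together and run one end-to-end call."""
    catalog = PriceCatalog({"pen": 150, "pad": 400})
    calculator = OrderCalculator(catalog)
    return calculator.total({"pen": 2, "pad": 1})
```

If the catalog returned prices in a different unit than the calculator expects, both components could pass their own unit tests and still fail this check, which is the kind of defect integration testing aims to expose.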

Before integration testing can be planned, an integration strategy is required. This involves making decisions on how the system will be put together prior to testing.

There are three commonly quoted integration strategies, namely:

1) Big-Bang Integration

2) Top-Down Integration

3) Bottom-Up Integration

<<<<<< =================== >>>>>>

Q. 13: How would you explain the different Integration Testing Strategies?

1) Big-Bang Integration: This is where all units are linked at once, resulting in a complete system. When testing of this system is conducted, it is difficult to isolate any errors found, because attention is not paid to verifying the interfaces across individual units.

This type of integration is generally regarded as a poor choice of integration strategy. It introduces the risk that problems may be discovered late in the project, where they are more expensive to fix.

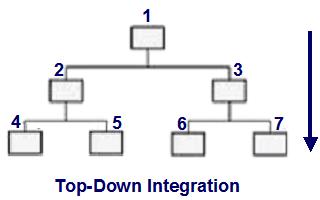

2) Top-Down Integration: This is where the system is built in stages, starting with components that call other components. Components that call others are usually placed above those that are called. Top-down integration testing will permit the tester to evaluate component interfaces, starting with those at the “top” as shown in the figure.

The control structure of a program can be represented in a chart. In the above figure, component 1 can call components 2 and 3. Thus in the structure, component 1 is placed above components 2 and 3. Component 2 can call components 4 and 5. Component 3 can call components 6 and 7. Thus in the structure, components 2 and 3 are placed above components 4 and 5 and components 6 and 7, respectively.

In this chart, the order of integration might be:

1,2 then 1,3 then 2,4 then 2,5 then 3,6 then 3,7
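The order above can be derived mechanically from the control structure. The following is a minimal sketch, assuming the call chart is held in a dictionary (the name `CALLS` and the function `top_down_order` are illustrative, not part of any standard tool). It walks the chart level by level from the top component.

```python
from collections import deque

# Call chart from the text: component 1 calls 2 and 3, and so on.
CALLS = {1: [2, 3], 2: [4, 5], 3: [6, 7], 4: [], 5: [], 6: [], 7: []}


def top_down_order(calls, root):
    """Return (caller, callee) pairs in a breadth-first, top-down order."""
    order = []
    queue = deque([root])
    while queue:
        caller = queue.popleft()
        for callee in calls[caller]:
            order.append((caller, callee))  # integrate this interface next
            queue.append(callee)            # visit the callee's own calls later
    return order


if __name__ == "__main__":
    print(top_down_order(CALLS, 1))
```

A breadth-first walk is only one possible choice; a depth-first walk would also be a valid top-down order, completing one branch before starting the next.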

Top-down integration testing requires that the interactions of each component be tested as it is built. Components lower down in the hierarchy may not have been built or integrated yet. In the above figure, in order to test component 1’s interaction with component 2, it may be necessary to replace component 2 with a substitute, since component 2 may not have been integrated yet.

This is done by creating a skeletal implementation of the component, called a stub. A stub is a passive component, called by other components. In this example, stubs may be used to replace components 4 and 5, when testing component 2.

The use of stubs is commonplace in top-down integration, replacing components not yet integrated.
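To make the idea of a stub concrete, here is a minimal sketch of testing component 2 while components 4 and 5 are replaced by stubs. All class and method names (`Component2`, `Component4Stub`, `fetch_rate_percent`, and so on) are hypothetical and exist only for this illustration.

```python
class Component4Stub:
    """Stub for component 4: passive, returns a fixed canned answer."""

    def fetch_rate_percent(self) -> int:
        return 10  # canned value instead of a real lookup


class Component5Stub:
    """Stub for component 5: passive, simply remembers what it was given."""

    def __init__(self):
        self.saved = []

    def save(self, value: int) -> None:
        self.saved.append(value)


class Component2:
    """Component under test: calls component 4 and then component 5."""

    def __init__(self, rate_source, store):
        self._rate_source = rate_source
        self._store = store

    def apply_rate(self, amount: int) -> int:
        rate = self._rate_source.fetch_rate_percent()
        result = amount * (100 + rate) // 100
        self._store.save(result)
        return result
```

A test would construct `Component2(Component4Stub(), Component5Stub())`, call `apply_rate(200)`, and check both the returned value and what reached the second stub. The stubs never initiate a call themselves; they only respond when component 2 calls them.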

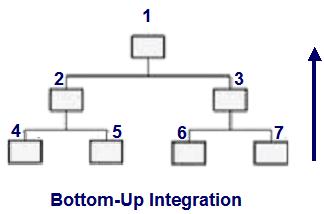

3) Bottom-Up Integration: This is the opposite of top-down integration. The components are integrated in a bottom-up order, as shown in the figure.

The integration order might be:

4,2 then 5,2 then 6,3 then 7,3 then 2,1 then 3,1

Hence, in bottom-up integration, components 4-7 would be integrated before components 2 and 3. In this case, the components that may not be in place are those that actively call other components. As in top-down integration testing, they must be replaced by specially written components. Because these special components call other components, they are called drivers. They are so called because, in the functioning program, the components they replace are active, controlling other components.

Components 2 and 3 could be replaced by drivers when testing components 4-7. Drivers are generally more complex than stubs.
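A driver can be sketched in a few lines. In the example below, `component4_parse_price` stands in for a low-level component, and `driver_for_component4` plays the role that component 2 would play in the finished program by actively calling it. Both names, and the price-parsing behaviour, are assumptions made for this illustration.

```python
def component4_parse_price(text: str) -> int:
    """Hypothetical low-level component: converts '12.50' to 1250 cents."""
    units, _, fraction = text.strip().partition(".")
    fraction = (fraction + "00")[:2]  # pad or trim to two decimal places
    return int(units) * 100 + int(fraction)


def driver_for_component4():
    """Driver: stands in for the missing caller and feeds inputs to component 4."""
    cases = {"12.50": 1250, "3": 300, " 0.05 ": 5, "7.5": 750}
    failures = []
    for text, expected in cases.items():
        actual = component4_parse_price(text)
        if actual != expected:
            failures.append((text, expected, actual))
    return failures  # an empty list means every call behaved as expected


if __name__ == "__main__":
    print(driver_for_component4())
```

Unlike a stub, the driver decides what to call, with which inputs, and how to judge the results, which is one reason drivers tend to need more code.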

There may be more than one level of integration testing. For example:

Component integration testing focuses on the interactions between software components and is done after component (unit) testing. Developers usually carry out this type of integration testing.

System integration testing focuses on the interactions between different systems and may be done after system testing of each individual system. For example, a trading system in an investment bank will interact with the stock exchange to get the latest prices for its stocks and shares on the international market. Testers usually carry out this type of integration testing.

It should be noted that testing at system integration level carries extra elements of risk. These can include: at a technical level, cross-platform issues; at an operational level, business workflow issues; and at a business level, risks associated with ownership of regression issues associated with change in one system possibly having a knock-on effect on other systems.

<<<<<< =================== >>>>>>

Q. 14: What is System Testing?

Having checked at unit and integration level that the components all work together, the next step is to consider the functionality from an end-to-end perspective. This activity is called system testing.

System testing is necessary because many of the criteria for test selection at unit and integration testing result in the production of a set of test cases that are unrepresentative of the operating conditions in the live environment. Thus testing at these levels is unlikely to reveal errors due to interactions across the whole system, or those due to environmental issues.

System testing serves to correct this imbalance by focusing on the behavior of the whole system/product as defined by the scope of a development project or program, in a representative live environment. A team that is independent of the development process usually carries it out. The benefit of this independence is that an objective assessment of the system can be made, based on the specifications as written, and not the code.

In the V-model, the behavior required of the system is documented in the functional specification. It defines what must be built to meet the requirements of the system. The functional specification should contain definitions of both the functional and non-functional requirements of the system.

A functional requirement is a requirement that specifies a function that a system or system component must perform. Functional requirements can be specific to a system. For instance, you would expect to be able to search for flights on a travel agent’s website, whereas you would visit your online bank to check that you have sufficient funds to pay for the flight.

Thus functional requirements provide detail on what the application being developed will do.

Non-functional system testing looks at those aspects that are important but not directly related to what functions the system performs. These tend to be generic requirements, which can be applied to many different systems. In the example above, you can expect that both systems will respond to your inputs in a reasonable time frame, for instance. Typically, these requirements will consider both normal operations and behavior under exceptional circumstances.
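A response-time requirement of this kind can be checked with a simple timing wrapper. The sketch below is an illustration only: `search_flights` is a hypothetical stand-in for a real operation, and the 2-second limit in `MAX_RESPONSE_SECONDS` is an assumed figure, not one taken from any specification.

```python
import time

MAX_RESPONSE_SECONDS = 2.0  # assumed limit for "a reasonable time frame"


def search_flights(origin: str, destination: str) -> list:
    """Hypothetical operation under test; a real one would query a service."""
    return [f"{origin}-{destination}-{n}" for n in range(3)]


def measure_seconds(operation, *args):
    """Run the operation once and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = operation(*args)
    elapsed = time.perf_counter() - start
    return result, elapsed


if __name__ == "__main__":
    flights, elapsed = measure_seconds(search_flights, "LHR", "JFK")
    print(len(flights), elapsed < MAX_RESPONSE_SECONDS)
```

The same wrapper could be applied unchanged to the bank-balance check from the example, which reflects the point that non-functional requirements tend to be generic across systems.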

The amount of testing required at system testing, however, can be influenced by the amount of testing carried out (if any) at the previous stages. In addition, the amount of testing advisable would also depend on the amount of verification carried out on the requirements.

Test bases for system testing can include:

1) System and software requirement specifications

2) Use cases

3) Functional specifications

4) Risk analysis reports

5) System, user and operation manuals

The test object will generally be the system under test.

<<<<<< =================== >>>>>>

Q. 15: Describe some examples of non-functional requirements that detail the performance of the application.

Some of the non-functional requirements are:

1) Installability: Installation procedures.

2) Maintainability: Ability to introduce changes to the system.

3) Performance: Expected normal behavior.

4) Load handling: Behavior of the system under increasing load.

5) Stress handling: Behavior at the upper limits of system capability.

6) Portability: Use on different operating platforms.

7) Recovery: Recovery procedures on failure.

8) Reliability: Ability of the software to perform its required functions over time.

9) Usability: Ease with which users can engage with the system.

