System Test Automation Primer: A Must-Read for Test Managers

It is essential for any software testing organization to become more efficient, especially in test automation. Automation is a strategic business activity that requires sound support from senior management; without that support, it can be doomed by a lack of adequate funds and other resources. Automation is aligned with the business mission and goals, and with the desire to speed up delivery of the system to the market without compromising its quality. Automation is a long-term investment and an ongoing process. Results cannot be realized overnight; expectations need to be managed so that they are realistically achievable within a given time period.

Some of the best reasons for automating system tests are:

1) Better productivity of software testing engineers

2) Better coverage of regression testing

3) Better reusability of test cases

4) Better consistency in testing

5) Reduction of test intervals

6) Reduction in cost of software maintenance

7) Better effectiveness of tests

Software testing managers consider the following prerequisites when assessing whether their organization is ready for test automation:

1) The system is stable and its functionalities are well defined.

2) The test cases to be automated do not have any ambiguities.

3) The software testing tools & infrastructure are available.

4) The test automation engineers have adequate experience in automation.

5) Sufficient budget has been allocated for the software tools.

The system must be stable enough for automation to be meaningful. If the system is constantly changing or frequently crashing, the cost of keeping the automated test suite up to date with the system will be high. Test automation will not succeed unless detailed test procedures are in place. It is very difficult to automate a test case that is not well defined for manual execution. If tests are executed in an ad hoc manner, without defined test objectives, detailed test procedures, and pass/fail criteria, they are not ready for automation.

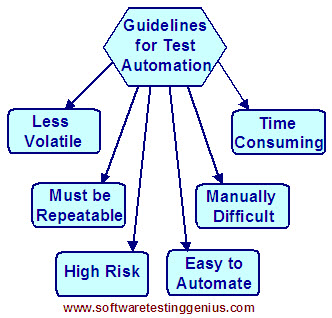

Test selection guidelines for automation:

Test cases are automated only if there is a clear economic benefit over manual execution. Some test cases are easy to automate while others are more troublesome.
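One way to make "clear economic benefit" concrete is a break-even calculation: automation pays off once the one-time cost of building the script plus the per-run cost of automated execution falls below the accumulated cost of manual runs. The sketch below is a simplified model under stated assumptions (all costs in one consistent unit such as person-hours, no separate script maintenance cost); the function name `break_even_runs` is hypothetical.

```python
import math
from typing import Optional


def break_even_runs(automation_cost: float,
                    automated_run_cost: float,
                    manual_run_cost: float) -> Optional[int]:
    """Return the smallest number of runs n for which
    automation_cost + n * automated_run_cost < n * manual_run_cost,
    or None if automation never becomes cheaper (simplified model)."""
    saving_per_run = manual_run_cost - automated_run_cost
    if saving_per_run <= 0:
        # Each automated run costs at least as much as a manual run.
        return None
    # The inequality holds exactly when n > automation_cost / saving_per_run.
    return math.floor(automation_cost / saving_per_run) + 1
```

For example, a script that takes 40 hours to build, 0.5 hours per automated run, and 2.5 hours per manual run breaks even after 21 executions, so a test that will run only a handful of times does not justify automation under this model. Folding an estimated per-run maintenance cost into `automated_run_cost` is a simple way to account for volatile test cases.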

The general guidelines in the figure above can be used to evaluate whether a test case is suitable for automation:

1) Must be less volatile: The test case is stable and unlikely to change over time. It should already have been executed manually, and its test steps and pass/fail criteria are not expected to change any more.

2) Must be repeatable: Test cases that are going to be executed several times should be automated; one-time test cases should not be considered for automation. Poorly designed test cases, which tend to be difficult to reuse, are not economical to automate.

3) Must have high risk associated with it: High-risk test cases are those that are routinely rerun after every new software build. Their objectives are so important that one cannot afford to skip re-executing them. In some cases, the propensity of the test cases to break is very high. These test cases are likely to pay off in the long run and are the right candidates for automation.

4) Must be easy to automate: Test cases that are easy to automate with the available automation tools should be automated. Some features of the system are easier to test than others, depending on the characteristics of a particular tool. Custom objects with graphic and sound features are likely to be more expensive to automate.

5) Must be difficult to execute manually: Test cases that are very hard to execute manually should be automated. Manual execution of such tests is a real burden; for example, a GUI test can cause eye strain from looking at too many screens for too long, and it is strenuous to watch transient results in real-time applications. These nasty, unpleasant test cases are good candidates for automation.

6) Must be boring and time-consuming: Test cases that are repetitive in nature and need to be executed over long periods of time should be automated. The tester’s time is better spent developing more creative and effective test cases.
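The six guidelines can be turned into a simple screening checklist. The sketch below is one possible encoding, not a standard method: the `Candidate` fields, the equal weighting of guidelines, and the threshold of four met guidelines are all assumptions chosen for illustration. Volatile and one-time test cases are filtered out first, since guidelines 1 and 2 rule them out regardless of their other merits.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    """Hypothetical profile of a manual test case being considered for automation."""
    name: str
    stable: bool               # 1) less volatile, already executed manually
    expected_runs: int         # 2) how many times it will be executed
    high_risk: bool            # 3) rerun after every new build
    tool_friendly: bool        # 4) easy to automate with the chosen tool
    hard_manually: bool        # 5) difficult or strenuous to execute by hand
    long_and_repetitive: bool  # 6) boring and time-consuming


def guideline_score(tc: Candidate) -> int:
    """Count how many of the six guidelines the test case meets (0 to 6)."""
    return sum([
        tc.stable,
        tc.expected_runs > 1,
        tc.high_risk,
        tc.tool_friendly,
        tc.hard_manually,
        tc.long_and_repetitive,
    ])


def select_for_automation(cases: List[Candidate], min_score: int = 4) -> List[str]:
    """Return names of eligible test cases, highest score first."""
    # Volatile or one-time test cases are excluded outright (guidelines 1 and 2).
    eligible = [tc for tc in cases if tc.stable and tc.expected_runs > 1]
    ranked = sorted(eligible, key=guideline_score, reverse=True)
    return [tc.name for tc in ranked if guideline_score(tc) >= min_score]
```

A weighted score (for instance, counting high risk double) is an easy variation if an organization values some guidelines more than others.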

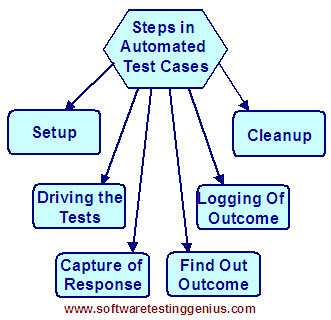

Typical structure of an automated test case:

An automated test case is a replica of the actions of a human tester in terms of creating initial conditions to execute the test, entering the input data to drive the test, capturing the output, evaluating the result, and finally restoring the system back to its original state.

The six major steps in an automated test case are shown in the accompanying picture and described below. Error handling routines are incorporated in each step to increase the maintainability and stability of test cases.
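A minimal sketch of how the six steps can fit together in one test function. The `FakeSut` class and its methods (`is_running`, `configure`, `send`, `receive`, `reset`) are hypothetical stand-ins for whatever interface a real SUT or test tool exposes. Treating any exception as an inconclusive verdict is a simplifying assumption; a real harness would separate harness errors from genuine SUT faults.

```python
import logging
from enum import Enum

log = logging.getLogger("automated_test")


class Verdict(Enum):
    PASS = "pass"
    FAIL = "fail"
    INCONCLUSIVE = "inconclusive"  # the test could not be evaluated


class FakeSut:
    """Hypothetical stand-in SUT: replies with the upper-cased input."""

    def __init__(self, running: bool = True):
        self.running = running
        self.mode = None
        self.last_reply = None
        self.resets = 0

    def is_running(self) -> bool:
        return self.running

    def configure(self, mode: str) -> None:
        self.mode = mode

    def send(self, data: str) -> None:
        self.last_reply = data.upper()

    def receive(self):
        return self.last_reply

    def reset(self) -> None:
        self.mode = None
        self.last_reply = None
        self.resets += 1


def run_test_case(sut, test_input, expected) -> Verdict:
    verdict = Verdict.INCONCLUSIVE
    try:
        # 1) Set up: confirm the SUT is running and apply test-specific parameters.
        if not sut.is_running():
            raise RuntimeError("SUT is not running")
        sut.configure(mode="test")
        # 2) Drive the test with input data.
        sut.send(test_input)
        # 3) Capture the response.
        response = sut.receive()
        # 4) Determine the outcome with a predetermined rule (exact match here).
        verdict = Verdict.PASS if response == expected else Verdict.FAIL
    except Exception as exc:
        # Error handling: a broken step means the result says nothing about the SUT.
        log.error("test aborted: %s", exc)
        verdict = Verdict.INCONCLUSIVE
    finally:
        # 5) Log the outcome and 6) clean up, even if an earlier step failed.
        log.info("input=%r verdict=%s", test_input, verdict.value)
        sut.reset()
    return verdict
```

Putting the logging and cleanup in `finally` means the SUT is restored for the next test case whether the current one passed, failed, or aborted.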

1) Setting up the test:

The setup includes steps to check the hardware, network environment, and software configuration, and to verify that the system under test (SUT) is running. In addition, all parameters of the SUT that are specific to the test case are configured, and other variables pertinent to the test case are initialized.
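Setup checks are most useful when they report every unmet precondition at once and refuse to start the test, rather than letting it fail later for reasons unrelated to the SUT. In the sketch below, the check names, the `SetupError` class, and the dictionary-based configuration are assumptions for illustration; real checks would probe the hardware, the network, and the SUT itself.

```python
import sys
from typing import Callable, Dict


class SetupError(Exception):
    """Raised when the test environment is not ready, so the test must not run."""


def check_preconditions(checks: Dict[str, Callable[[], bool]]) -> None:
    """Run every named check and report all failures together."""
    failures = [name for name, check in checks.items() if not check()]
    if failures:
        raise SetupError("setup failed: " + ", ".join(failures))


def set_up(baseline_config: dict, test_params: dict,
           checks: Dict[str, Callable[[], bool]]) -> dict:
    check_preconditions(checks)
    # Apply test-case-specific SUT parameters on top of a copy of the baseline,
    # leaving the baseline itself untouched for the cleanup step.
    config = {**baseline_config, **test_params}
    # Initialize other variables pertinent to the test case.
    return {"config": config, "attempts": 0}


# Example checks (hypothetical): interpreter version and a stubbed SUT probe.
EXAMPLE_CHECKS = {
    "python>=3.8": lambda: sys.version_info >= (3, 8),
    "sut_running": lambda: True,  # replace with a real probe of the SUT
}
```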

2) Driving the test:

The test is driven by providing input data to the SUT, in a single step or in multiple steps. The input data should be generated in a form that the SUT can read, understand, and respond to.
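Input can be checked against the format the SUT expects before anything is sent, so a malformed test input is caught as a test-script error instead of being reported as an SUT failure. The `VERB argument` command format and the `send` callable below are hypothetical; a real SUT would define its own protocol or API.

```python
import re
from typing import Callable, Iterable, List, Tuple

# Hypothetical SUT command format: an upper-case verb, one space, a single-token argument.
COMMAND_PATTERN = re.compile(r"[A-Z]+ \S+")


def build_commands(steps: Iterable[Tuple[str, str]]) -> List[str]:
    """Turn (verb, argument) pairs into commands the SUT can parse,
    rejecting malformed input before it reaches the SUT."""
    commands = []
    for verb, arg in steps:
        cmd = f"{verb.upper()} {arg}"
        if not COMMAND_PATTERN.fullmatch(cmd):
            raise ValueError(f"malformed test input: {cmd!r}")
        commands.append(cmd)
    return commands


def drive(send: Callable[[str], None], steps: Iterable[Tuple[str, str]]) -> None:
    """Drive the test in one step or several; all input is validated before any is sent."""
    for cmd in build_commands(steps):
        send(cmd)
```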

3) Capturing the response:

The response from the SUT is captured and saved. Manipulation of the output data from the system may be required to extract the information that is relevant to the objective of the test case.
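A sketch of capturing a response: the raw output is saved untouched for later diagnosis, and only the fields relevant to the test objective are extracted for evaluation. It assumes, purely for illustration, that the SUT answers in JSON; `save` is any callable that persists text (for example, one that writes to a file), and the field names are hypothetical.

```python
import json
from typing import Callable, Dict, Iterable


def capture_response(raw: str, save: Callable[[str], None],
                     relevant_fields: Iterable[str]) -> Dict[str, object]:
    """Save the raw SUT output, then extract only the fields the test needs."""
    save(raw)  # keep the unmodified output for diagnosis and reproduction
    data = json.loads(raw)
    # Fields not requested (timestamps, host names) are dropped so they cannot
    # cause spurious mismatches; a missing requested field comes back as None.
    return {name: data.get(name) for name in relevant_fields}
```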

4) Determining the outcome:

The actual outcome is compared with the expected outcome. Predetermined decision rules are applied to evaluate any discrepancies between the actual and expected outcomes and to decide whether the test result is a pass or a fail. If a fail verdict is assigned to the test case, additional diagnostic information is needed. One must be careful in designing the rules for assigning a pass/fail verdict to a test case. A failed test procedure does not necessarily indicate a problem with the SUT: the failure could be a false positive. Similarly, a passed test procedure does not necessarily indicate that there is no problem with the SUT: the pass could be a false negative. False negatives and false positives can occur for several reasons, such as setup errors, test procedure errors, test script logic errors, or user errors.
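The decision rules can be made explicit in code. This sketch assumes dictionary-shaped outcomes, a relative tolerance for floating-point fields, and a third `INCONCLUSIVE` verdict for runs whose setup did not complete; that third verdict is one common way to keep setup errors from being reported as SUT failures (false positives), not something the rules above prescribe. The returned list of discrepancies doubles as the diagnostic information needed for a fail verdict.

```python
import math
from enum import Enum
from typing import Dict, List, Tuple


class Verdict(Enum):
    PASS = "pass"
    FAIL = "fail"
    INCONCLUSIVE = "inconclusive"


def decide(actual: Dict[str, object], expected: Dict[str, object],
           setup_ok: bool = True, rel_tol: float = 1e-6) -> Tuple[Verdict, List[str]]:
    """Compare the actual outcome with the expected one using predetermined rules."""
    if not setup_ok:
        # A failure here would say nothing about the SUT, so it is not evaluated.
        return Verdict.INCONCLUSIVE, ["setup did not complete; outcome not evaluated"]
    discrepancies = []
    for field, want in expected.items():
        if field not in actual:
            discrepancies.append(f"{field}: missing from actual outcome")
            continue
        got = actual[field]
        if isinstance(want, float) and isinstance(got, (int, float)):
            # Floating-point values are compared within a relative tolerance.
            same = math.isclose(got, want, rel_tol=rel_tol)
        else:
            same = got == want
        if not same:
            discrepancies.append(f"{field}: expected {want!r}, got {got!r}")
    return (Verdict.FAIL if discrepancies else Verdict.PASS), discrepancies
```

A tolerance that is too loose hides real defects (false negatives), while one that is too tight flags harmless rounding differences (false positives), so it deserves the same care as any other decision rule.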

5) Logging the outcome:

A detailed record of the results is written in a log file. If the test case failed, additional diagnostic information is needed, such as environment information at the time of failure, which may be useful in reproducing the problem later.
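A sketch of writing one structured log record per test case with Python's standard `logging` module, adding environment details only when the verdict is not a pass. The record layout and the choice of fields (host name, OS, Python version) are assumptions; a real framework would add whatever state helps reproduce its own failures.

```python
import json
import logging
import platform
from datetime import datetime, timezone
from typing import List, Optional


def log_outcome(logger: logging.Logger, test_id: str, verdict: str,
                details: Optional[List[str]] = None) -> dict:
    """Write one JSON log line per test case; failures get extra diagnostics."""
    record = {
        "test_id": test_id,
        "verdict": verdict,
        "time": datetime.now(timezone.utc).isoformat(),
    }
    if verdict != "pass":
        # Environment at the time of failure, to help reproduce the problem later.
        record["environment"] = {
            "host": platform.node(),
            "os": platform.platform(),
            "python": platform.python_version(),
        }
        record["details"] = details or []
    (logger.info if verdict == "pass" else logger.error)(json.dumps(record))
    return record
```

To send these records to a log file, the logging system can be configured once at the start of a run, for example with `logging.basicConfig(filename="test_run.log", level=logging.INFO)`.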

6) Cleaning up:

A cleanup action includes steps to restore the SUT to its original state so that the next test case can be executed. The setup and cleanup steps within the test case need to be efficient in order to reduce the overhead of test execution.
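One way to guarantee that cleanup runs even when a test fails is a context manager that snapshots state before the test and restores it afterwards. This sketch assumes the SUT state can be represented as a Python dictionary; against a real system, the snapshot and restore steps would be calls to the SUT (reloading a database, resetting configuration, and so on). Deep-copying the whole state is simple but can be slow for large states, which is exactly the setup and cleanup overhead the paragraph above warns about.

```python
import copy
from contextlib import contextmanager
from typing import Iterator


@contextmanager
def restored_state(state: dict) -> Iterator[dict]:
    """Snapshot a dict-based (hypothetical) SUT state and restore it after the test."""
    snapshot = copy.deepcopy(state)
    try:
        yield state
    finally:
        # Runs whether the test passed, failed, or raised an exception.
        state.clear()
        state.update(snapshot)
```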

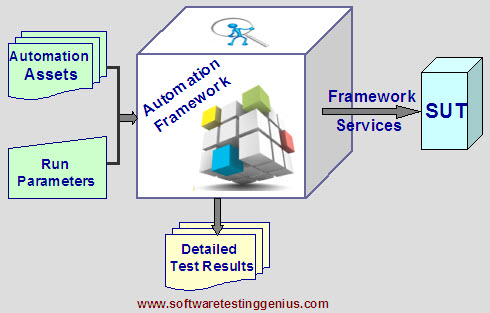

Typical components of a test automation framework:

A test automation framework consists of the test tools, equipment, test scripts, procedures, and people needed to make test automation efficient and effective. Creating and maintaining a test automation framework is fundamental to the success of any test automation project within an organization. Implementing an automation framework generally requires a full-fledged automation test group.

An automation framework primarily ensures:

1) Different test tools and equipment are coordinated to work together.

2) The library of the existing test case scripts can be reused for different test projects, thus minimizing the duplication of development effort.

3) Nobody creates test scripts in their own idiosyncratic way.

4) Consistency is maintained across test scripts.

5) The test suite automation process is coordinated so that the automated suite is available just in time for regression testing.

6) People understand their responsibilities in automated testing.
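A small illustration of points 2, 3, 4, and 6: a shared registry from which every project draws its test scripts, a single runner that reports results the same way for all of them, and an owner recorded for each script. The decorator name `register`, the result labels, and the example scripts are hypothetical; established test tools such as pytest or JUnit provide their own, far richer versions of this idea.

```python
from typing import Callable, Dict, List

# Shared library of reusable test scripts, keyed by a unique name.
REGISTRY: Dict[str, Callable[[], bool]] = {}


def register(name: str, owner: str):
    """Decorator that adds a test script to the shared library."""
    def wrap(func: Callable[[], bool]) -> Callable[[], bool]:
        if name in REGISTRY:
            # Prevents two scripts from silently competing for the same name.
            raise ValueError(f"duplicate test script name: {name}")
        func.owner = owner  # who is responsible for maintaining this script
        REGISTRY[name] = func
        return func
    return wrap


def run_suite(names: List[str]) -> Dict[str, str]:
    """Run selected scripts through one common runner so results are consistent."""
    results = {}
    for name in names:
        script = REGISTRY.get(name)
        if script is None:
            results[name] = "missing"
            continue
        try:
            results[name] = "pass" if script() else "fail"
        except Exception:
            # A crashing script is an error in the test, not a verdict on the SUT.
            results[name] = "error"
    return results


# Example scripts with placeholder bodies (hypothetical).
@register("login_valid_user", owner="qa-team-a")
def login_valid_user() -> bool:
    return True


@register("report_totals", owner="qa-team-b")
def report_totals() -> bool:
    return False
```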


An expert in R&D, online training, and publishing. He holds an M.Tech. (Honours) and has been part of the STG team since its inception.