An Insight into Boundary Testing of Embedded Applications in Our Software Testing Effort

Why do testers look so closely at the boundaries? Because bugs very frequently appear in the code that handles boundary conditions.

Boundary value testing is directed at the values on the boundary of an equivalence class. Boundary values need extra attention because defects very often lie on the boundaries or close to them. It’s very easy for a programmer to write < 100 instead of <= 100. For any particular boundary, we need at least two test cases: one for the “on boundary” condition and one for the “out of boundary” condition. Selecting test cases based on boundary value analysis makes them considerably more effective at finding these defects.

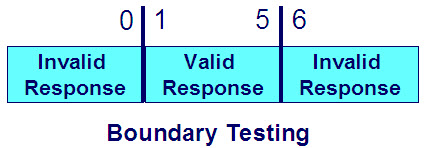
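The following minimal Python sketch makes the `< 100` versus `<= 100` slip concrete. It assumes a hypothetical requirement that inputs from 1 to 100 inclusive are valid. The names `is_valid_buggy`, `is_valid` and `CASES` are illustrative only. A nominal value from the middle of the range passes against both versions. The "on boundary" case is the one that exposes the defect.

```python
# Hypothetical requirement: integer inputs 1..100 inclusive are valid.
LOWER, UPPER = 1, 100

def is_valid_buggy(x: int) -> bool:
    # Defect: '<' should be '<=' on the upper bound.
    return LOWER <= x < UPPER

def is_valid(x: int) -> bool:
    return LOWER <= x <= UPPER

# (input, expected) pairs: one nominal value, then the "on boundary" and
# "out of boundary" cases for the upper edge.
CASES = [(50, True), (UPPER, True), (UPPER + 1, False)]

def failing_cases(check):
    """Return the cases where `check` disagrees with the expected result."""
    return [(x, want) for x, want in CASES if check(x) != want]

if __name__ == "__main__":
    print(failing_cases(is_valid_buggy))  # [(100, True)]
    print(failing_cases(is_valid))        # []
```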

Since we can have both valid and invalid equivalence classes, we can also have both valid and invalid boundary values, and both should be tested. Sometimes, however, the invalid ones cannot be tested.

When we select boundary values for testing, we must select the boundary value and at least one value one unit inside the boundary in the equivalence class. This means that for each boundary we test two values.

Conventional testing practice also recommends choosing a value one unit outside the equivalence class, so that three values are tested for each boundary. Such a value actually lies on the boundary of the adjacent equivalence class, so some duplication can occur. We may still choose to test three values; the choice between two and three values should be governed by a risk evaluation.
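Here is a small sketch of the two-value and three-value selection rules for an integer equivalence class `[lower, upper]`. The function name and the integer-only, unit-step assumption are illustrative. Real inputs may have a different resolution, such as 0.01 V or one ADC count.

```python
def boundary_values(lower: int, upper: int, three_value: bool = False) -> list:
    """Test inputs for the integer equivalence class [lower, upper].

    Two-value mode: each boundary plus the value one unit inside it.
    Three-value mode: additionally the value one unit outside each boundary,
    which is also a boundary of the neighbouring equivalence class.
    """
    if lower >= upper:
        raise ValueError("class needs at least two members to have an 'inside' value")
    values = {lower, lower + 1, upper - 1, upper}
    if three_value:
        values |= {lower - 1, upper + 1}
    return sorted(values)

print(boundary_values(1, 100))                    # [1, 2, 99, 100]
print(boundary_values(1, 100, three_value=True))  # [0, 1, 2, 99, 100, 101]
```

In three-value mode, 0 and 101 are exactly the duplicated values mentioned above. They are the edges of the invalid classes on either side.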

Boundary value coverage can also be measured. It is the percentage of the identified boundary values that are exercised by a particular set of tests.
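As a worked illustration, coverage = (boundary values exercised / boundary values identified) × 100%. The sketch below assumes the boundary values have already been identified and that each test is described by the input value it applies. Both are simplifications for illustration.

```python
from typing import Iterable

def boundary_coverage(boundary_values: Iterable[int], test_inputs: Iterable[int]) -> float:
    """Percentage of identified boundary values exercised by at least one test input."""
    targets = set(boundary_values)
    if not targets:
        raise ValueError("no boundary values identified")
    covered = targets & set(test_inputs)
    return 100.0 * len(covered) / len(targets)

# Boundary values from a two-value analysis of the class [1, 100], and a
# hypothetical test suite that happens to use the inputs 1, 50 and 100.
print(boundary_coverage([1, 2, 99, 100], [1, 50, 100]))  # 50.0
```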

When Does Boundary Value Testing Come into the Picture?

Boundary testing occurs when we test the extreme values of the input domain:

1) Maximum values

2) Minimum values

3) Values just within the boundary

4) Values just outside the boundary

5) A variety of nominal values

6) Deliberate error values
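The six categories above can be turned into a test plan for a simple integer input. This is a minimal sketch assuming a valid range `[lower, upper]` with unit steps. The dictionary keys and the particular "deliberate error" values are illustrative choices, not a standard. Nominal values are drawn with a fixed seed so that the plan is reproducible.

```python
import random

def boundary_test_plan(lower: int, upper: int, nominal_count: int = 3, seed: int = 0) -> dict:
    """Group integer test inputs for the valid range [lower, upper] by category."""
    if upper - lower < nominal_count + 3:
        raise ValueError("range too narrow for distinct nominal values")
    rng = random.Random(seed)                # fixed seed keeps the plan reproducible
    interior = range(lower + 2, upper - 1)   # excludes boundaries and just-inside values
    return {
        "maximum": [upper],
        "minimum": [lower],
        "just_inside": [lower + 1, upper - 1],
        "just_outside": [lower - 1, upper + 1],
        "nominal": sorted(rng.sample(interior, nominal_count)),
        # Deliberately malformed inputs; illustrative choices only.
        "deliberate_error": [None, "not-a-number", float("nan")],
    }

for category, values in boundary_test_plan(1, 100).items():
    print(category, values)
```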

Boundary testing is simple to understand but often difficult to implement. Regardless, we see no excuse for not pushing the product to its limits, especially at the boundaries.

Boundary Testing with Maximum and Minimum Values:

1) Lookup Table Ranges:

One way to introduce maximum/minimum testing is with a lookup table. If every input and output of the system has been defined, we just need to catalog them in a machine-readable table and let the test software apply the appropriate values.
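The following is a minimal sketch of a table-driven extremes test. It assumes a hypothetical CSV catalog of signal ranges and a caller-supplied `apply_stimulus(signal, value)` hook that stands in for the real test bench and returns whether the device behaved correctly. The signal names, ranges and hook are invented for illustration.

```python
import csv
import io

# Hypothetical machine-readable catalog of system I/O ranges. In practice this
# would be maintained alongside the interface specification.
IO_TABLE = """signal,min,max,unit
battery_voltage,9.0,16.0,V
coolant_temp,-40,125,degC
engine_speed,0,8000,rpm
"""

def load_ranges(text):
    """Parse the catalog into {signal: (min, max, unit)}."""
    reader = csv.DictReader(io.StringIO(text))
    return {row["signal"]: (float(row["min"]), float(row["max"]), row["unit"])
            for row in reader}

def extreme_vectors(ranges):
    """Yield (signal, value, label) for the minimum and maximum of every entry."""
    for name, (lo, hi, _unit) in ranges.items():
        yield name, lo, "min"
        yield name, hi, "max"

def run_table(ranges, apply_stimulus):
    """Drive every extreme through the test-bench hook and collect failures."""
    return [(name, label, value)
            for name, value, label in extreme_vectors(ranges)
            if not apply_stimulus(name, value)]

# Usage with a fake device that mishandles the top of the temperature range.
fake_dut = lambda name, value: not (name == "coolant_temp" and value == 125.0)
print(run_table(load_ranges(IO_TABLE), fake_dut))  # [('coolant_temp', 'max', 125.0)]
```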

2) Analog and Digital Input Ranges:

The concept of testing electrical analog values is quite simple. The maximum and minimum expected signals are known, and nominal values lie between these extremes. A combinatorial method or some other approach can be used to apply these values as stimuli.
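One possible combination method is a full factorial of minimum, nominal and maximum levels across all analog channels. That choice is an assumption for this sketch; the text does not name a specific method. The channel names and voltage ranges are hypothetical, and "nominal" is taken as the midpoint of each range.

```python
from itertools import product

# Hypothetical analog channels: (minimum, maximum) expected signal in volts.
CHANNELS = {
    "sensor_a": (0.5, 4.5),
    "sensor_b": (0.0, 5.0),
}

def levels(lo, hi):
    """Minimum, midpoint (taken as nominal), and maximum for one channel."""
    return (lo, (lo + hi) / 2, hi)

def stimulus_matrix(channels):
    """Every combination of min/nominal/max across all channels (3**n rows)."""
    names = list(channels)
    per_channel = [levels(*channels[n]) for n in names]
    return [dict(zip(names, combo)) for combo in product(*per_channel)]

for row in stimulus_matrix(CHANNELS):
    print(row)
```

The row count grows as 3^n with the number of channels. With many inputs, a pairwise (all-pairs) selection is a common way to keep the matrix manageable.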

Digital value testing becomes interesting if we can stimulate the product with values that lie somewhere between electronic zero and electronic one, in an indeterminate region. We are then observing how the system reacts to an indeterminate truth-value. Failures of this kind are one reason we use watchdog timers to reset the system, since indeterminacy can lead to an apparent system “hang.”
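To pick stimulus voltages inside the indeterminate region, we need the input thresholds of the device. This sketch assumes TTL-style levels of 0.8 V (highest guaranteed logic 0) and 2.0 V (lowest guaranteed logic 1) purely as example values. The real limits come from the datasheet of the input in question. Inside the band the hardware may read either value, or may toggle between them.

```python
# Example input thresholds (TTL-style levels). Replace with datasheet values.
V_IL_MAX = 0.8  # highest voltage guaranteed to read as logic 0
V_IH_MIN = 2.0  # lowest voltage guaranteed to read as logic 1

def classify(voltage: float) -> str:
    """Nominal logic interpretation of an input voltage."""
    if voltage <= V_IL_MAX:
        return "0"
    if voltage >= V_IH_MIN:
        return "1"
    return "indeterminate"

def indeterminate_sweep(steps: int = 5) -> list:
    """Evenly spaced test voltages strictly inside the undefined band."""
    width = V_IH_MIN - V_IL_MAX
    return [round(V_IL_MAX + width * i / (steps + 1), 3) for i in range(1, steps + 1)]

for v in indeterminate_sweep():
    print(v, classify(v))
```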

3) Function Time-Out:

Some embedded systems contain timer-driven functions that are designed to time out. Testing should put the software testing engineers in a position to challenge this time-out mechanism. One way to do so is to attack the function with an unexpected sequence of interrupts. If the clocking for the time-out in the function is not deterministic, a software failure can be expected. Interrupts used this way force a high level of context switching. Time is spent saving registers and stack frames and then returning to the original state, only for the function to be interrupted again.
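This Python sketch contrasts two hypothetical ways of clocking a time-out under an interrupt storm. It uses a simulated tick counter rather than real hardware; `SimClock`, `irq_rate` and `irq_cost` are assumptions made for illustration. One version counts loop iterations and assumes each takes exactly one tick, which is non-deterministic clocking. The other compares elapsed time against the timer on every pass. Each injected interrupt costs extra ticks for the handler and context switch, so the first version's real wait stretches far past its limit. The second overshoots by at most one interrupt.

```python
import random

class SimClock:
    """Deterministic tick counter standing in for a hardware timer."""
    def __init__(self):
        self.now = 0

def do_work(clock, rng, irq_rate, irq_cost):
    """One tick of useful work, possibly followed by an interrupt whose
    handler (including register/stack save and restore) costs irq_cost ticks."""
    clock.now += 1
    if rng.random() < irq_rate:
        clock.now += irq_cost

def timeout_by_loop_count(clock, limit, **irq):
    # Non-deterministic clocking: assumes every iteration takes one tick.
    start = clock.now
    for _ in range(limit):
        do_work(clock, **irq)
    return clock.now - start

def timeout_by_clock(clock, limit, **irq):
    # Deterministic clocking: checks the timer on every pass.
    start = clock.now
    while clock.now - start < limit:
        do_work(clock, **irq)
    return clock.now - start

if __name__ == "__main__":
    for wait in (timeout_by_loop_count, timeout_by_clock):
        irq = dict(rng=random.Random(1), irq_rate=0.5, irq_cost=20)
        print(wait.__name__, "elapsed ticks:", wait(SimClock(), 100, **irq))
```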

4) Watchdog Performance:

Embedded software products commonly use a “watchdog” timer. External watchdog timers must be reset periodically to keep them from resetting the entire system. Internal watchdog timers are sometimes called “watch puppies” because they are usually less robust than external devices, although they function the same way. Basically, if the software does not reset the watchdog timer in time, it is assumed that the software has become too incoherent to function properly. Once the device software is considered stable, the watchdog timer is often turned off by mutual agreement between the developers and the customer.
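Watchdog servicing is itself a boundary worth testing: kicking just inside, exactly at, and just outside the timeout. The sketch below is a hypothetical software model, not a real driver API. It assumes the reset fires once the tick count since the last kick exceeds the timeout, and that on each tick the timer advances before the firmware kicks. Real hardware may fire exactly at the timeout, so the datasheet decides where "on boundary" lies.

```python
class Watchdog:
    """Hypothetical software model of a watchdog timer."""

    def __init__(self, timeout_ticks: int):
        self.timeout = timeout_ticks
        self.counter = 0   # ticks since the last kick
        self.resets = 0    # system resets the watchdog has forced

    def kick(self) -> None:
        """Service the watchdog, restarting its countdown."""
        self.counter = 0

    def tick(self) -> None:
        """Advance one timer tick; force a reset once the timeout is exceeded."""
        self.counter += 1
        if self.counter > self.timeout:
            self.resets += 1
            self.counter = 0

def resets_for_kick_interval(timeout: int, interval: int, total_ticks: int) -> int:
    """Simulate firmware that kicks every `interval` ticks; count forced resets."""
    wd = Watchdog(timeout)
    for t in range(1, total_ticks + 1):
        wd.tick()              # the timer advances first...
        if t % interval == 0:
            wd.kick()          # ...then the firmware services it
    return wd.resets

if __name__ == "__main__":
    # Around a 10-tick timeout. Expected: 9 -> 0, 10 -> 0, 11 -> 9 resets.
    for interval in (9, 10, 11):
        print(interval, resets_for_kick_interval(10, interval, 100))
```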

5) Transaction Speed:

Transaction processing can be disrupted by interrupts in much the same way as the function time-out capability. Interrupts make software execution asynchronous, which reduces predictability and causes unexpected problems. In most realistic cases, the test aims to load the processor heavily enough that it begins to struggle with routine processing, which in turn becomes noticeable to the end user.
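Before going to the bench, a rough timing budget can suggest what interrupt rate should start to bog the processor down. This first-order sketch assumes a fixed processing period, a fixed routine-task cost, and a fixed cost per interrupt that includes context save and restore. All the numbers are hypothetical, and it ignores jitter, caching and priority effects, so the bench test still has to find the real breaking point.

```python
def deadline_met(irqs_per_period: int, period_us: int, task_us: int, isr_us: int) -> bool:
    """True if routine work plus interrupt overhead fits within one period."""
    return task_us + irqs_per_period * isr_us <= period_us

def breaking_point(period_us: int, task_us: int, isr_us: int, max_irqs: int = 10_000):
    """Smallest interrupt count per period at which routine processing overruns."""
    for n in range(max_irqs + 1):
        if not deadline_met(n, period_us, task_us, isr_us):
            return n
    return None

# Hypothetical budget: 1000 us period, 600 us of routine work, 25 us per interrupt.
n = breaking_point(1000, 600, 25)
print(n)  # 17
```

The boundary pair worth testing on the hardware is then 16 and 17 interrupts per period.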

Strengths of Boundary Testing:

It is quite easy to neglect testing the boundaries. Boundaries are present everywhere in a software product and are not limited to input values. By introducing tests for the boundaries, we can reduce, or to a considerable extent eliminate, bugs at the edges that would otherwise be missed.

Weaknesses of Boundary Testing:

Boundary value analysis has a drawback: software testing engineers may focus so heavily on the edges that product functionality receives less attention. Across the entire testing effort, testers need to strike a sensible balance between the time spent and the risk being mitigated. Past statistical data can be extremely helpful in deciding how much time and effort to invest in testing. Even so, an efficient boundary value analysis catches edge problems with little extra effort. Compare this with equivalence partitioning: suppose that any value from 1 to 99 would produce the same result. We tend to pick a low value from inside that range, believing it makes an equally good test, and so we miss the bugs at the boundary.


An expert in R&D, online training and publishing. He holds an M.Tech. (Honours) and has been part of the STG team since its inception.